Casey Waldren

Hi! I work full-time at LaunchDarkly.

Previously, I worked at Axon and Hardlight VR, and before that I studied computer science and philosophy at the University of Rochester.

- Virtual Reality

- Graphics

- Android Projects

- Misc

Hardlight VR

I'm a software developer for Hardlight VR, a virtual reality startup in Seattle, Washington. I work on the developer APIs and backend engine that power the suit.

A critical aspect of API design is giving users feedback on their actions. When using the Hardlight API, many haptic effects may be active at once. These effects can take place all across the body, with varying strengths and waveforms. I designed and built an API to propagate this information from the lowest levels of the runtime all the way to the game developer SDKs. Using this interface, my coworker built helpful realtime visualizations.

Here, colors represent different waveform classifications, such as "click" or "bump". The opacity of the color represents the strength of the effect. Since we are a hardware startup, it's essential that we have some form of feedback for our developers when physical devices are not available.

In our runtime, lack of physical devices is not a special case. We can write configuration profiles for new or nonexistent devices, and these devices are treated the same as a real piece of hardware. As far as a game is concerned, there's no difference. At the lowest levels, this involved augmenting our hardware APIs such that all calls could be intercepted and simulated.
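To make the idea concrete, here is a minimal sketch of a device interface where a simulated device is interchangeable with a real one. It is not the Hardlight runtime's code: `Device`, `SimulatedDevice`, `dispatch`, and the profile layout are all hypothetical names chosen for illustration.

```python
from abc import ABC, abstractmethod


class Device(ABC):
    """Hypothetical interface: real and simulated devices look the same to callers."""

    @abstractmethod
    def play(self, region: str, waveform: str, strength: float) -> None:
        ...


class SimulatedDevice(Device):
    """Built from a configuration profile instead of physical hardware (assumed layout)."""

    def __init__(self, profile: dict):
        self.regions = set(profile["regions"])
        self.log = []  # intercepted calls, e.g. for a visualizer to read

    def play(self, region, waveform, strength):
        # Only record effects aimed at regions this device claims to cover.
        if region in self.regions:
            self.log.append((region, waveform, strength))


def dispatch(devices, region, waveform, strength):
    """The caller never checks whether a device is real or simulated."""
    for device in devices:
        device.play(region, waveform, strength)
```

A game-side call such as `dispatch(devices, "chest_left", "click", 0.5)` behaves the same whether `devices` holds hardware-backed objects or only `SimulatedDevice` instances.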

The Hardlight API is built on the idea that simple primitives can be combined into complex effects. One of the first abstractions we arrived at was the emanation - essentially a graph search over a human body. An emanation may start at a point and move outwards, or move from point to point. Special considerations for proximity of nodes, both logically and physically, had to be taken into account. We also schemed up a few new ways of addressing the body - but that's for another post.
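An emanation that starts at a point and moves outwards can be sketched as a breadth-first search that groups body regions by hop distance. This is an illustration only: the region names and the `BODY_GRAPH` adjacency map are invented, and the real API also weighs physical proximity, which this sketch ignores.

```python
# Hypothetical adjacency map of body regions; names are illustrative only.
BODY_GRAPH = {
    "chest_left": ["chest_right", "shoulder_left", "abs_left"],
    "chest_right": ["chest_left", "shoulder_right", "abs_right"],
    "shoulder_left": ["chest_left", "upper_arm_left"],
    "shoulder_right": ["chest_right", "upper_arm_right"],
    "upper_arm_left": ["shoulder_left"],
    "upper_arm_right": ["shoulder_right"],
    "abs_left": ["chest_left", "abs_right"],
    "abs_right": ["chest_right", "abs_left"],
}


def emanate(graph, start):
    """Return regions grouped into waves by hop distance from `start`."""
    seen = {start}
    frontier = [start]
    waves = []
    while frontier:
        waves.append(frontier)
        next_frontier = []
        for node in frontier:
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    next_frontier.append(neighbor)
        frontier = next_frontier
    return waves
```

Each wave could then be scheduled a fixed delay after the previous one, so the effect appears to spread from the starting region.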

The creation of a haptic effect in the Unity Editor is simple. Under the hood, the data is recursively flattened into one of the Hardlight API's most basic primitives: a timeline. This timeline is then submitted to the system, and dispatched to available hardware. It's easy to get carried away with complex abstractions, but those belong in layers above the core API.
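The flattening step might look roughly like the sketch below. The nested dictionary format and the `flatten` function are assumptions made for illustration; the actual Unity-side data model is not described here.

```python
def flatten(node, start=0.0):
    """Recursively flatten a nested effect into sorted (time, region, waveform) events.

    A leaf is a dict with "region" and "waveform"; a composite is a dict with
    "children", a list of (offset_seconds, child) pairs. Both shapes are hypothetical.
    """
    if "children" not in node:
        return [(start, node["region"], node["waveform"])]
    events = []
    for offset, child in node["children"]:
        # A child's offset is relative to its parent's start time.
        events.extend(flatten(child, start + offset))
    return sorted(events)


pulse = {"region": "chest_left", "waveform": "click"}
double_pulse = {"children": [(0.0, pulse), (0.25, pulse)]}
pattern = {"children": [(0.0, double_pulse), (1.0, double_pulse)]}
timeline = flatten(pattern)  # four events at 0.0, 0.25, 1.0 and 1.25 seconds
```

However deeply the effects are nested, the layer below only ever sees the flat list of timed events.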

Following the idea that lower levels should be simpler and not depend on upper levels has allowed us to tear out and replace implementations while higher levels observe no differences. In an extreme case, we were able to backport a completely incompatible Hardlight API into a game by simple DLL replacement.

My coworker demonstrates an upper-body tracking prototype here, using Hardlight's tracking API. The data is ferried almost directly from the suit into Unity as fast as Unity can consume it.

When I first faced the challenge of delivering tracking data from our hardware to our game developers, I ran into a few dead-ends. Protocol choice is important here, because we want the lowest latency possible, support for multiple clients, and support for variable-rate polling. After much research and prototyping, I settled on a shared-memory solution. The Hardlight suit's tracking solves VR's arm problem: with only controllers and a headset providing tracking, kinematic solutions for the upper arms feel weird and get stuck on many edge cases. Now we can accurately display your real movement.

Images are courtesy of my excellent coworker Jonathan Palmer, who is responsible for Hardlight's Haptic Explorer and Unity tools, the subject of these gifs.

Shader Series

Shaders are computer programs that run for every pixel on your screen. If you know the math, you can create anything. I'm not quite sure what I've created, but some of them are interesting (like #4; I'm not sure what's going on there).

The series of WebGL shaders will load when you press the button below. Don't worry if it takes a few seconds!

Academic Projects

Over the course of a semester spent in Budapest, Hungary, I created many Android apps, some C++ programs, and a C# app. First up are some of the more interesting Android apps.

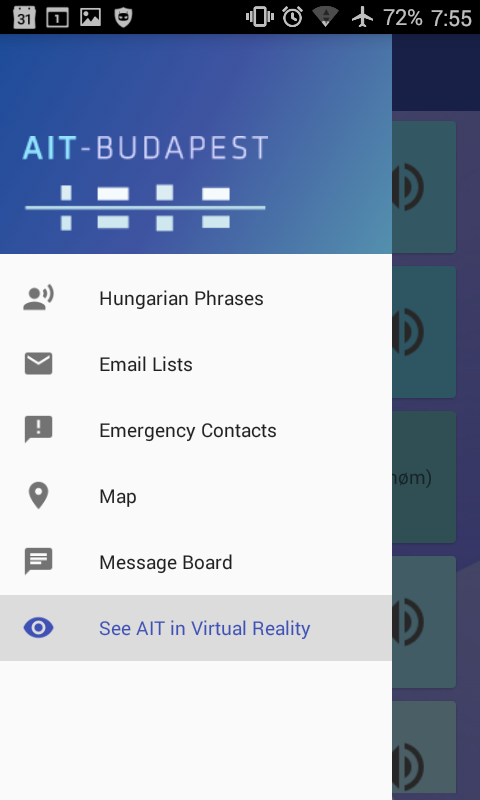

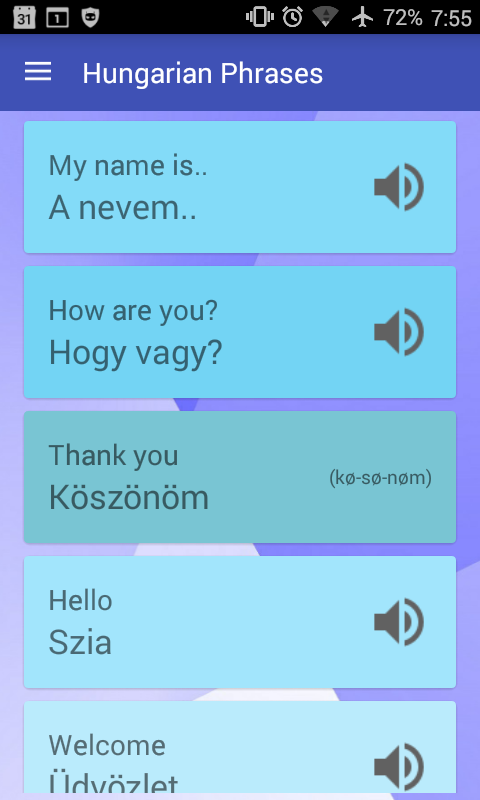

AIT App

This app is aimed at students at AIT Budapest, a small program for computer science students in Hungary.

Its functionality includes playing Hungarian phrases, viewing professors, viewing important places on a map, chatting, viewing the school in virtual reality, and quickly seeing emergency contacts.

Minesweeper

This minesweeper app uses a breadth-first search to figure out if you've hit a mine. It also features flag placing and colored numbers.
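The app's source isn't shown here. In most Minesweeper implementations, breadth-first search is what uncovers a connected region of empty cells after a safe click, so the sketch below assumes that use. The `reveal` function and the set-of-coordinates board are illustrative choices, not the app's actual code.

```python
from collections import deque

NEIGHBORS = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]


def adjacent_mines(mines, r, c):
    """Count mines in the eight cells around (r, c)."""
    return sum((r + dr, c + dc) in mines for dr, dc in NEIGHBORS)


def reveal(mines, rows, cols, start):
    """Return the cells uncovered by clicking `start`, or None if it is a mine."""
    if start in mines:
        return None
    revealed = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if adjacent_mines(mines, r, c) > 0:
            continue  # numbered cell: show it, but do not expand past it
        for dr, dc in NEIGHBORS:
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and (nr, nc) not in revealed:
                revealed.add((nr, nc))
                queue.append((nr, nc))
    return revealed
```

On a 3x3 board with a single mine in one corner, clicking the opposite corner uncovers all eight safe cells in one move.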

The app ended up entertaining me for quite a while, although it's not so fun to immediately die on your first turn. That's why real Minesweeper will go out of its way to move any mine out from under your mouse on your first try.

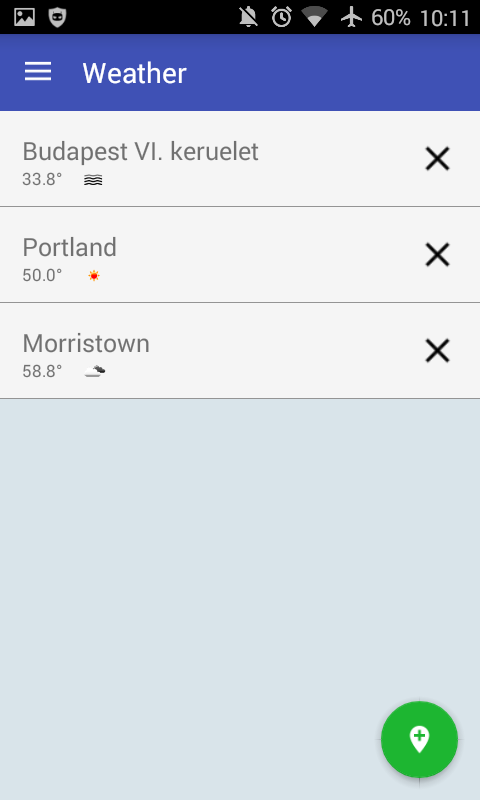

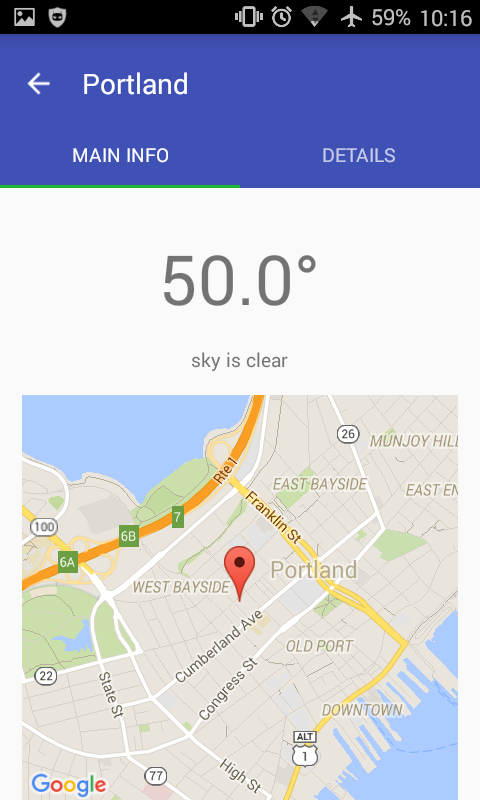

Weather App

Using an open weather API, this app allows you to fuzzy-search for a location and retrieve the current weather conditions.

It was somewhat challenging using the Google Maps API in this demo, as it involved Android fragments within fragments. I figured it out soon enough!

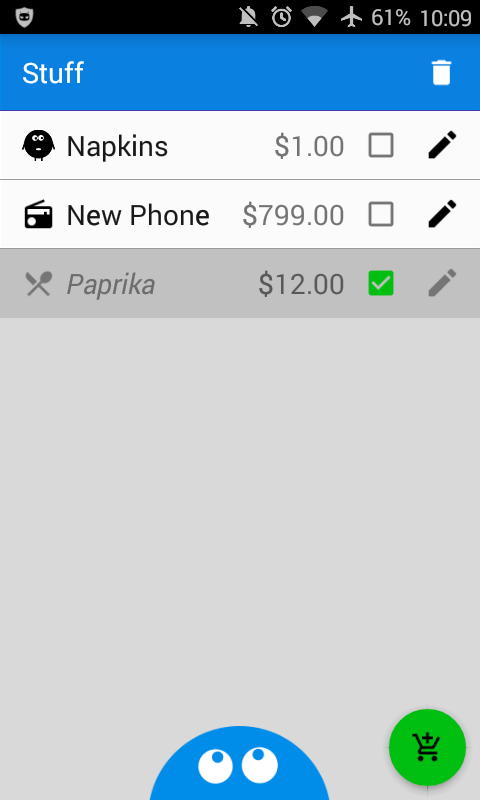

Todo App

Stuff is a simple todo app.

Key features include the recycler view for quick scrolling, as well as the ability to edit and check off todos. Todos can also be classified within a few different categories.

I especially enjoyed making a startup animation, but was frustrated by the lack of a pre-made solution for a recycler view empty state. You end up doing the same custom logic every time, although I hear this feature is in the works.

Curve Creator

This C++ program is a curve editor. It supports Lagrange curves, Bézier curves, and Catmull-Rom splines.
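For readers unfamiliar with these curve types, here is a small Python sketch of how two of them can be evaluated: a Bézier curve via de Casteljau's algorithm and a uniform Catmull-Rom segment. The original program is written in C++ and its code is not shown, so the function names and the uniform parameterization are assumptions.

```python
def lerp(a, b, t):
    """Linear interpolation between points a and b."""
    return tuple((1 - t) * x + t * y for x, y in zip(a, b))


def bezier(points, t):
    """Evaluate a Bézier curve of any degree with de Casteljau's algorithm."""
    pts = list(points)
    while len(pts) > 1:
        # Replace each adjacent pair of points with their interpolation.
        pts = [lerp(p, q, t) for p, q in zip(pts, pts[1:])]
    return pts[0]


def catmull_rom(p0, p1, p2, p3, t):
    """Uniform Catmull-Rom segment; passes through p1 at t=0 and p2 at t=1."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (c - a) * t
               + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )
```

The practical difference for an editor is that a Catmull-Rom spline passes through its control points, while a Bézier curve is only pulled toward its interior ones.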

Also included is pixel-perfect curve selection, as well as easy selection of control points.

Flight!

Fly through the clouds and fight a giant tiger in this award-winning AAA title! (If your device doesn't support mp4, click here for giant gif.)

This program uses OpenGL to render a few low-poly models. The floor texture is constantly changed to give the illusion of motion, while the clouds and trees infinitely spawn from an object pool.

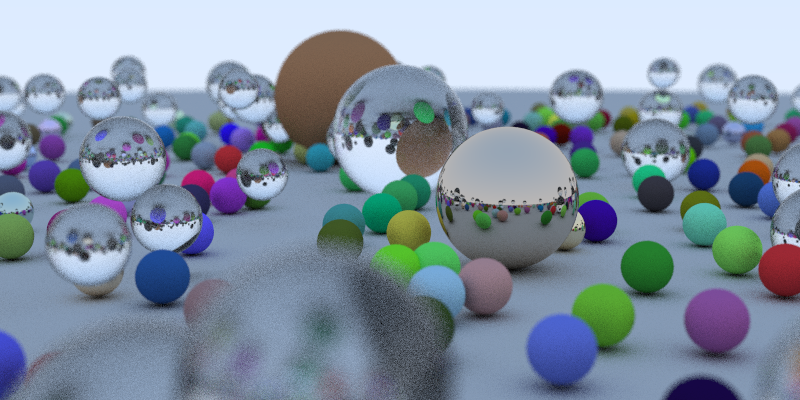

Raytracer

This image was generated by a raytracer specialized in creating chess pieces.

The source image is four times as large; it was downscaled for antialiasing purposes.

As a way of learning Rust, I ported the raytracer in Peter Shirley's book Ray Tracing in One Weekend.

Unfortunately, graph structures are rather difficult in Rust, so the octrees will need to wait until I gain more experience.

Street Mapper

This Java app maps the streets of Rochester, NY, allowing you to plot the shortest path between any two points.

It's multithreaded so the UI doesn't slow down, and features a minimum spanning tree.
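The description above doesn't say which shortest-path algorithm the app uses. Dijkstra's algorithm is a common choice for road networks with non-negative edge lengths, so here is a Python sketch of it, assuming the map is stored as an adjacency list. The intersection names and `shortest_path` are hypothetical.

```python
import heapq


def shortest_path(graph, source, target):
    """Dijkstra's algorithm; `graph` maps node -> list of (neighbor, distance).

    Returns (total_distance, path) or None if `target` is unreachable.
    """
    dist = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry; a shorter route was already found
        for neighbor, weight in graph.get(node, []):
            nd = d + weight
            if nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                prev[neighbor] = node
                heapq.heappush(heap, (nd, neighbor))
    if target not in dist:
        return None
    # Walk the predecessor links back from the target to rebuild the route.
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return dist[target], path[::-1]


# Hypothetical intersections and distances, for illustration only.
streets = {
    "A": [("B", 1), ("C", 4)],
    "B": [("A", 1), ("C", 2), ("D", 5)],
    "C": [("A", 4), ("B", 2), ("D", 1)],
    "D": [("B", 5), ("C", 1)],
}
```

Running a search like this on a worker thread, rather than the UI thread, is one way to keep the interface responsive on a city-sized graph.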

Crypto Storage

This group project allows a user to encrypt & upload a file to a remote Azure server.

This means a hosting company has no idea what is contained within the file. Check out Tresorit for the real deal.

Othello AI

Othello, also known as Reversi, is a game of piece-taking played by two people. I don't possess video of our AI playing, but trust me, it was quite entertaining. The AI played against a few other programs in a contest where timing mattered. This means the AI had to search as far as possible into the future in as little time as possible, relying on fast heuristics. Surprisingly, many human tactics are extremely suboptimal.

Our AI used the negamax algorithm. One group member implemented bitboards, which describe the state of the game in 64-bit integers, with one bit per board square. This allows for the storage of millions more states than usual.
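Neither technique's code from the project is shown here, so the following is only a sketch under stated assumptions. The bitboard part assumes the usual Othello layout of two 64-bit masks, one per color. The negamax part uses a toy game (take one to three stones; whoever takes the last stone wins) in place of Othello to stay short, and it searches to the end of the game rather than cutting off with a heuristic. All names are hypothetical.

```python
from functools import lru_cache

MASK64 = (1 << 64) - 1  # Python ints are unbounded, so clamp to 64 bits


def square(row, col):
    """Bit for the square at (row, col) on an 8x8 board."""
    return 1 << (row * 8 + col)


# Standard Othello opening: two discs of each color in the center.
BLACK_START = square(3, 4) | square(4, 3)
WHITE_START = square(3, 3) | square(4, 4)


def count(bits):
    """Number of discs in a bitboard."""
    return bin(bits).count("1")


def empty_squares(black, white):
    """Bitboard of squares occupied by neither color."""
    return ~(black | white) & MASK64


@lru_cache(maxsize=None)
def negamax(stones):
    """Score for the player to move in the toy game: +1 is a win, -1 a loss."""
    if stones == 0:
        return -1  # the opponent just took the last stone
    # A position is worth the best of the negated scores of its successors.
    return max(-negamax(stones - take) for take in (1, 2, 3) if take <= stones)
```

A contest engine would replace the exhaustive recursion with a depth limit and a fast evaluation function at the cutoff, which is where the heuristics mentioned above come in.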

The result? An unstoppable (by us, at least!) computer.

Images

I've done various graphic design work over the years, using a combination of Photoshop, Illustrator, Inkscape, and GIMP. I also did a fair bit of 3D modeling a few years ago. Here's a link to some of the images that still exist on this webserver.